An Attribution Framework for Transparent Use of Generative AI in University Coursework

Abstract

As Generative Artificial Intelligence (AI) tools become prevalent in university students’ coursework, how and in what form students should attribute their use of AI has become an important question for maintaining academic integrity and transparency. Although how AI use should be attributed in academic coursework is actively discussed, a gap exists between the current discourse on acknowledging AI use and how students actually use AI in their studies. While the majority of guidelines suggest following formal citation styles such as APA and MLA, attributing AI use in the form of a citation disregards both the purpose of referencing a knowledge source and the diverse ways in which students use AI. We propose a Generative AI Attribution Framework that specifies what constitutes an AI use that should be attributed in students’ coursework. Our framework contributes to the current discussion of attributing the use of Generative AI in higher education by setting clear boundaries for its use cases.

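Abstract (Korean)

As the use of Generative AI tools for coursework becomes commonplace in university education, how students should declare their use of AI in order to maintain academic integrity and transparency is emerging as an important issue. Although methods for indicating AI use for academic purposes are being actively discussed, a large gap remains between the current discussion and the ways students actually use AI. Most guidelines recommend following formal citation styles such as APA or MLA, but indicating AI use only in the form of a citation does not adequately reflect either the original purpose of citation, which is to present the source of knowledge, or the varied real-world contexts in which students use AI. To systematize the specific categories of Generative AI use that must be declared in coursework, this study proposes the Generative AI Attribution Framework. By providing clear attribution criteria that can be applied to the diverse use cases in university education, we expect the framework to extend the discussion on attributing AI use.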

Keywords:

Generative AI, Academic Integrity and Transparency, Generative AI Attribution, Contributor Role Taxonomy (CRediT)

Keywords (Korean):

Generative AI, Academic Integrity and Transparency, Generative AI Attribution Method, CRediT Role Taxonomy

1. Introduction

As Generative AI demonstrates powerful text generation and reasoning capabilities, the academic use of Generative AI tools is expanding within higher education. Meanwhile, misusing such AI tools has introduced difficulties in maintaining core principles of academic integrity and honesty[1]. Universities worldwide have created institution-level policies on Generative AI, emphasizing responsible use of AI tools for genuine intellectual growth[2].

Current discourse on attributing AI use in academia revolves around whether using AI is allowed, partially allowed, or strictly prohibited[3]. While prohibiting AI use to uphold academic integrity and foster critical thinking sounds plausible, side effects may arise. AI detection software may produce false positives, since no software can detect AI use with perfect certainty, and some students intentionally insert spelling or grammar errors to lower the quality of their writing and bypass AI detection[4].

Consequently, researchers are investigating methods for effectively integrating Generative AI into teaching and learning[5]. Among syllabus policies that allow AI tools, a central theme is transparency[3]: such policies emphasize the need to acknowledge and attribute the use of AI tools in order to encourage responsible use and uphold academic integrity. However, those syllabus statements specify neither what constitutes AI use that needs to be declared nor how it should be attributed.

This study proposes a Generative AI Attribution Framework outlining which AI use cases in assignments require attribution and which do not. We collected documented cases of students’ academic AI use from relevant literature and classified them using the Contributor Role Taxonomy[6]. Our framework aims to reduce confusion about which AI uses require attribution and to support ongoing efforts to integrate Generative AI into education.

2. Related Works

Among the many AI attribution methods, a large portion of institution- and course-level policies center on adopting formal citation styles (e.g., APA, MLA, Chicago) as the guideline for how students should attribute the use of Generative AI in assignments. At the institutional level, McDonald et al.[2] found that about 38% of R1 higher education institutions in the United States provide a formal citation style as the method for attributing AI use.

Course-level Generative AI attribution methods show a similar trend. Ali et al.[3] examined the syllabi of 98 computing courses in the United States and found that 27.5% of them required students to use informal or formal citation (e.g., APA) of Generative AI tools. Attribution methods vary widely, including acknowledging, annotating, providing informal citations, and providing formal citations. This finding suggests that the majority of Generative AI policies guide students to attribute the use of AI or to use formal citation styles, without detailed instructions on what type of AI use should be declared and how the attribution statement should be written.

However, students have diverging perceptions and behaviors regarding the declaration of AI usage, and they attribute selectively. Draxler et al.[7] revealed the AI ghostwriter effect, where users do not disclose their use of AI in writing even though they attribute ownership of the text to AI. The primary reasons for not attributing were users’ perception of AI as ‘just a tool’ rather than a co-author, and their fear of negative social consequences of AI usage, such as that their text ‘would not be valued’ or that ‘not much thought would have been put’ into the writing.

In the educational context, a study by Gonsalves[1] investigated reasons why students do not reveal their use of AI in assignments. The main reasons were that students feared potential grade penalties or accusations of academic misconduct, but most of all, students were uncertain about what AI usage should be declared. Students found that existing guidelines were vague, unclear, and inconsistent, which made them feel unsure of their ability to comply with the AI declaration requirements.

This behavioral mismatch is evident in a survey conducted with 4,800 ETH Zurich students[8]. When asked how Generative AI should be considered in assessments, 52.3% of students responded ‘it depends’ or ‘do not know’. This indicates that a significant portion of the student body does not hold a uniform view on acceptable AI use. Further, 29.7% of students responded that the use is ‘legitimate’, which indicates that a substantial minority of the student body has already accepted and normalized these tools as a standard part of their academic workflow. Therefore, a gap exists between formal institutional policies and students’ lived academic reality. This strongly motivates the need for clear guidance on how to attribute AI usage for transparency in higher education.

3. Literature Review

To establish an empirical foundation for our framework, we conducted a literature review following Paré and Kitsiou[9]. To the best of our knowledge, our work is the first to differentiate specific use cases of AI attribution, so existing literature on this specific topic is scarce. We therefore concentrate on prior works central to the topics of AI citation and AI attribution policies in higher education, searched using the Google Scholar database. From an initial 21 resources, we selected the 14 works most relevant to the scope of this study.

In this section, we discuss two mainstream AI attribution methods: following formal citation styles, and declaring what AI was used for and how in an attribution statement.

3.1 Citation Styles for AI

APA, MLA and Chicago citation styles provide guidelines on how to cite Generative AI. The overview of how each citation style classifies the output of Generative AI is described in Table 1.

The APA style treats Generative AI outputs (text, tables, images, code, etc.) as algorithmic outputs and requires citing the developer as the author[10]. Developers like OpenAI are credited as authors, with the tool’s version and URL included in references. When using AI tools, researchers should describe how they used the tool and include generated text in an appendix or supplementary material. However, because the APA defines authors as ‘people’ who contribute substantially to research, AI systems are not considered authors.

The MLA style defines Generative AI as a tool that analyzes and summarizes vast sets of information to generate outputs[11]. A citation of AI-generated output should include the AI tool’s name, version, generation date, URL, etc. AI is not treated as an author, and using it for sentence editing or translation should be indicated in footnotes or the main text. When using secondary sources cited by AI, the researcher must independently verify those sources and clearly indicate that they are indirectly sourced.

The Chicago style treats AI conversations as personal communication[12], and when using outputs from AI tools such as ChatGPT, the source should be cited in the main text or footnotes. However, if the URL assigned to an AI conversation is not accessible, it should not be included in the reference. Text generated from other AI tools is treated in the same way.

Citing AI-generated content as a means of attributing AI use may not align with the academic purposes and functions of citation. Style guidelines on how to cite AI may be necessary for cases where the goal is to make explicitly clear, or draw attention to the fact, that a particular text originates from a specific AI tool[13]. However, citation serves to credit the author’s contribution to information or research findings, introduce the source of information to readers, and provide additional resources[14]. It also demonstrates the significance of the research, justifies the procedures and materials used, and supports the author’s claims and arguments to present the results persuasively[15].

From the perspective of why sources are cited, simply guiding students to ‘cite’ their use of AI in coursework creates a mismatch between the purpose of citation and how students use AI. The AI-generated content differs by nature from traditional sources of knowledge, such as peer-reviewed research papers, published books, and newspapers. The content is not deterministic even with the same prompt, and has a risk of including false information, bias, and hallucinations.

Further, students use AI in forms that cannot be cited, such as improving their writing, fixing grammar mistakes, or summarizing an article. Shope[13] recognizes this distinction by proposing AI attribution practices that distinguish between intellectual tasks that require an AI usage statement and those that require a citation. The proposed practice suggests attributing the use of AI when it is used to create content or improve grammar and style, and citing the AI-generated content when there is a need to highlight or quote particular output language.

3.2 Cases of Generative AI Policies on Documenting AI Use in Higher Education

Current Generative AI policies in higher education have broad themes of academic integrity, ethical use of AI, enhancing teaching and learning, information security, and transparency[16]. The theme of transparency emphasizes the importance of attributing AI use in content creation and assessment, and guidelines for citing AI-generated content[16].

Among them, we examine the two most detailed institutional Generative AI policies on declaring AI use: Monash University[17] and the University of North Carolina (UNC) at Chapel Hill[18]. Monash University emphasizes that AI tools may be used more for brainstorming ideas or refining writing than as a source of information, and that in such cases citation may not be appropriate. The university instructs students to document their use of Generative AI by identifying the AI tool, its use, its purpose, and the edits or changes made to the AI content, as detailed in Table 2.

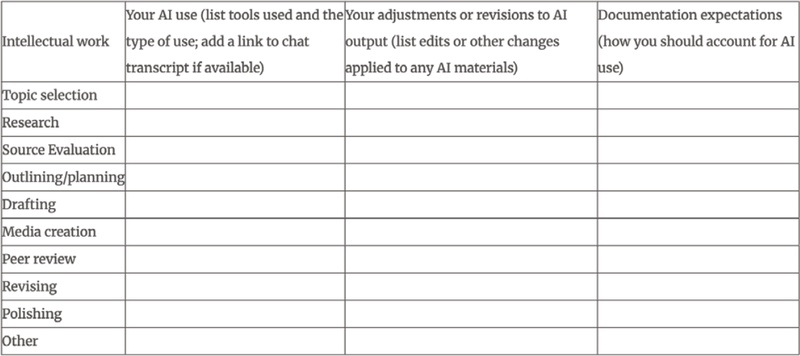

Similarly, UNC offers a Generative AI usage attribution form and encourages students to give detailed descriptions of AI usage in their projects, as shown in Fig. 1.

Although both guidelines are detailed, they do not distinguish AI uses that need declaration from those that do not, and they are limited to assignment types such as papers and reports. As a result, these guidelines are confined to humanities-style assignments and fail to cover the specific ways AI is used in computational and scientific work.

3.3 What type of AI use should be attributed?

Few works have addressed what type of AI use should be acknowledged in written works. The previously mentioned work of Draxler et al.[7] suggests introducing and expanding the Contributor Role Taxonomy (CRediT) as a framework for specifying AI’s contribution in human-AI collaboration. Most notably, He et al.[19] investigate how much attribution AI should receive in writing, depending on the type and amount of contribution the AI has made compared to human authors. They found that the degree of attribution differs by contribution effort; for instance, people consider that fixing spelling and grammar mistakes should receive much less attribution credit than synthesizing information.

In addressing the use of AI in education, Krakovetskyi and Shevchenko[20] proposed a risk categorization that distinguishes between AI use cases requiring attribution and those that do not. A key aspect of their analysis is that attribution is unnecessary when AI is used for conventional tasks that do not generate new knowledge or meanings, such as machine translation or checking spelling and grammar.

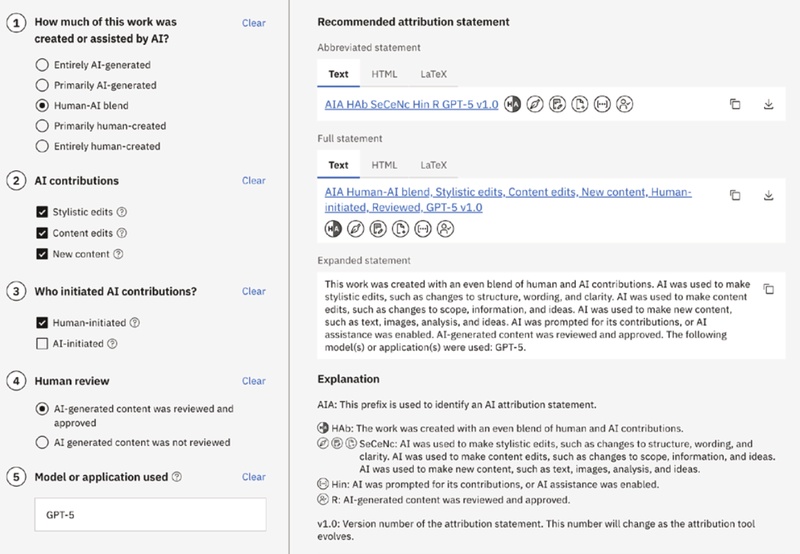

Along with this line of research, IBM formulated an AI attribution statement[18], which specifies whether and how AI was used in the final content. An example of the attribution statement is illustrated in Fig. 2. The statement includes how much and what the AI contributed, and whether a human reviewed the final content. This suggests that the current trend in attributing AI use focuses on describing the use rather than citing the AI: attribution statements should move away from citing AI output and towards declaring the type and degree of AI use.

4. AI Attribution Framework

We propose an AI Attribution Framework for higher education computing courses that categorizes whether AI use in assignments should or should not be attributed.

4.1 Framework Construction

We created the AI Attribution Framework through the following process. First, we adopted the CRediT taxonomy[6] to determine and classify what AI use should be attributed. Second, among the 14 roles of CRediT, we excluded ‘Funding acquisition’, ‘Project administration’, ‘Resources’, and ‘Supervision’, since only humans can take on these roles. Third, we gathered diverse cases of how students are using AI from related literature[8, 19-23]. The sources and selected educational use cases of AI are shown in Table 3. Fourth, we added two new categories to our framework, ‘do not need attribution’ and ‘should not use for academic work’. Finally, we classified students’ AI use cases into the corresponding categories. The complete framework is presented in Table 4.
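The second and fourth steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the role names follow the CRediT taxonomy[6] (with plain hyphens in place of dashes), while the variable and function names are hypothetical, and the actual contents of Table 4 are not reproduced here.

```python
# The 14 CRediT contributor roles (Brand et al. [6]).
CREDIT_ROLES = [
    "Conceptualization",
    "Data curation",
    "Formal analysis",
    "Funding acquisition",
    "Investigation",
    "Methodology",
    "Project administration",
    "Resources",
    "Software",
    "Supervision",
    "Validation",
    "Visualization",
    "Writing - original draft",
    "Writing - review & editing",
]

# Roles excluded in the second step because only humans can take them on.
HUMAN_ONLY_ROLES = {
    "Funding acquisition",
    "Project administration",
    "Resources",
    "Supervision",
}

# The two categories added in the fourth step.
ADDED_CATEGORIES = [
    "Do not need attribution",
    "Should not use for academic work",
]


def build_framework_categories():
    """Return the retained CRediT roles followed by the two added categories."""
    retained = [role for role in CREDIT_ROLES if role not in HUMAN_ONLY_ROLES]
    return retained + ADDED_CATEGORIES


categories = build_framework_categories()
print(len(categories))  # 10 retained roles + 2 added categories = 12
```

Each collected student use case would then be assigned to one of these twelve categories in the final classification step.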

The rationale for adding two new categories to our framework is based on existing literature. For the ‘do not need attribution’ category, we follow the APA and MLA styles[10, 11]. Both specify that citation is unnecessary for common software and passive containers that do not affect the content of the work. We added the ‘should not use for academic work’ category following the current Generative AI policies in higher education[16]. Most policies mention the risks of hallucinations, misinformation, and potential bias, as well as the privacy implications, of using Generative AI[3].

In contrast to the attribution methods discussed above, we put emphasis on AI use cases that do not require attribution, in order to reduce student uncertainty and establish attribution boundaries. While Krakovetskyi and Shevchenko[20] proposed that attributing AI use is unnecessary when using AI does not create new knowledge or meanings, our approach is more conservative and narrow.

Our criteria are as follows: tasks that could be completed with existing tools (e.g., search engines, translators, dictionaries, spell-checkers, or calculators) do not require attribution; however, cognitively advanced tasks newly enabled by Generative AI (e.g., document summarization, tone/style transformation) fall within the attribution range. For information search, students may use AI tools to search for information and knowledge, but directly using the output in an assignment is prohibited. Instead, they should investigate the original source of the AI output and reference that source in their assignments, which amounts to using AI tools as if they were search engines. Before Generative AI, academic works did not require acknowledging the use of dictionaries and calculators, but did require references to the sources of information, and we follow this same principle.
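As a rough illustration, the criteria above can be read as a small decision rule. The sketch below is one possible reading, not the framework itself: the class, field, and function names are hypothetical, and whether a task is achievable with existing tools is treated as a judgment supplied by the instructor or student.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    NO_ATTRIBUTION = "no attribution needed"
    ATTRIBUTE = "attribute the AI use"
    CITE_ORIGINAL_SOURCE = "verify and cite the original source, not the AI output"


@dataclass(frozen=True)
class AIUse:
    """A single AI use case (hypothetical structure for illustration)."""
    description: str
    is_information_search: bool
    doable_with_existing_tools: bool


def decide(use: AIUse) -> Decision:
    """Apply the criteria in order: information search, existing tools, otherwise attribute."""
    if use.is_information_search:
        # AI output must not be used directly; the original source is referenced instead.
        return Decision.CITE_ORIGINAL_SOURCE
    if use.doable_with_existing_tools:
        # Comparable to using a dictionary, spell-checker, or calculator.
        return Decision.NO_ATTRIBUTION
    # Cognitively advanced task newly enabled by Generative AI.
    return Decision.ATTRIBUTE


examples = [
    AIUse("checking spelling", False, True),
    AIUse("summarizing a document", False, False),
    AIUse("searching for background information", True, True),
]
for use in examples:
    print(f"{use.description}: {decide(use).value}")
```

Checking information search first reflects the rule that source references are always required, even though a search engine is itself an existing tool.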

The AI Attribution Framework specifies the scenarios where students are required to attribute their use of these tools in the coursework, covering a wide range of academic applications. We provide a sample Generative AI policy statement that allows its use:

Students may use Generative AI for assignments in this course. Assignments must not include Generative AI output copied verbatim without modification, contain evidence without proper source attribution, or present inaccurate or unverified information. Students must understand and acknowledge that they hold full responsibility for the final content of their submission when using Generative AI. When using AI for the ‘Investigation’ role, students should directly verify the original sources suggested by the Generative AI when completing their assignments and cite those sources in formal citation style in the references. Additionally, they should describe the purpose and manner of AI use in the acknowledgments section of their assignment.
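The acknowledgment requested at the end of the sample policy could be recorded in a structured form. The following minimal sketch assumes four fields (tool, CRediT role, purpose, and manner); the policy itself only asks for the purpose and manner of use, so the field names, the class name, and the sentence template are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUseAcknowledgment:
    """One acknowledgment entry (hypothetical structure for illustration)."""
    tool: str      # assumed field: name of the Generative AI tool
    role: str      # assumed field: CRediT role the AI use falls under
    purpose: str   # why the tool was used
    manner: str    # how the tool was used, written as a full sentence

    def to_statement(self) -> str:
        """Render the entry as a sentence for the acknowledgments section."""
        return (
            f"I used {self.tool} in the '{self.role}' role "
            f"to {self.purpose}. {self.manner}"
        )


ack = AIUseAcknowledgment(
    tool="a Generative AI chatbot",
    role="Writing - review & editing",
    purpose="adjust the tone of the introduction",
    manner="I reviewed and revised every suggested change before submission.",
)
print(ack.to_statement())
```

Keeping the role as an explicit field ties each acknowledgment back to a category of the framework.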

4.2 Justification of our Framework

Our AI Attribution Framework is grounded in established academic standards rather than constructed as an entirely novel or arbitrary system. Specifically, the framework aligns with CRediT, a widely adopted standard for attributing contributorship in major academic journals and conferences. By grounding our framework in this widely recognized taxonomy and deriving our use cases directly from existing literature, we ensure that the framework remains compatible with current research practices. Consequently, the novel components of this framework represent a logical extension: they were developed solely to address specific gaps that previous methods could not adequately capture. This approach ensures that the framework captures the nuance of AI-human collaboration while maintaining structural consistency with traditional attribution standards.

While this study establishes a theoretical foundation for AI attribution in higher education, we recognize that empirical validation is required to confirm its practical efficacy. Future research will focus on verifying the framework through expert validation and pilot implementation, with a focus on student usability and instructor impact. Through this follow-up study, we aim to refine the framework into an evidence-based educational policy.

5. Conclusion

The rise of Generative AI in education demands new attribution methods, as existing guidelines fail to match the ways students use AI and are unclear on what to attribute and how. This study proposes the Generative AI Attribution Framework for computing courses in higher education. It builds on the CRediT taxonomy, classifying AI by its scholarly role rather than as a simple tool. The framework clarifies attribution boundaries, distinguishing between uses that must be attributed, those that are exempt (like using a calculator), and those that are prohibited, thereby providing a practical guideline.

Acknowledgments

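This research was supported in 2026 by the Ministry of Science and ICT and the Institute of Information & Communications Technology Planning & Evaluation (IITP) under the SW-Centered University Program (2023-0-00044).

This paper is an extended version of a paper presented at the 2025 Winter Conference of the Korean Association of Computer Education under the title “A Survey and Analysis of Source Attribution Methods for the Use of Generative AI in University Course Assignments”.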

References

- Gonsalves, C. (2025). Addressing student non-compliance in AI use declarations: implications for academic integrity and assessment in higher education. Assessment & Evaluation in Higher Education, 50(4), 592-606. https://doi.org/10.1080/02602938.2024.2415654

- McDonald, N., Johri, A., Ali, A., & Collier, A. H. (2025). Generative artificial intelligence in higher education: Evidence from an analysis of institutional policies and guidelines. Computers in Human Behavior: Artificial Humans, 3, 100121. https://doi.org/10.1016/j.chbah.2025.100121

- Ali, A., Collier, A. H., Dewan, U., McDonald, N., & Johri, A. (2025). Analysis of generative AI policies in computing course syllabi. In Proceedings of the 56th ACM Technical Symposium on Computer Science Education V. 1 (pp. 18-24). https://doi.org/10.1145/3641554.3701823

- Al-Sibai, N. (2025, May 8). College Students Are Sprinkling Typos Into Their AI Papers on Purpose. Futurism. https://futurism.com/college-students-ai-typos

- Wang, H., Dang, A., Wu, Z., & Mac, S. (2024). Generative AI in higher education: Seeing ChatGPT through universities' policies, resources, and guidelines. Computers and Education: Artificial Intelligence, 7, 100326. https://doi.org/10.1016/j.caeai.2024.100326

- Brand, A., Allen, L., Altman, M., Hlava, M., & Scott, J. (2015). Beyond authorship: Attribution, contribution, collaboration, and credit. Learned Publishing, 28(2), 151–155. https://doi.org/10.1087/20150211

- Draxler, F., Werner, A., Lehmann, F., Hoppe, M., Schmidt, A., Buschek, D., & Welsch, R. (2024). The AI ghostwriter effect: When users do not perceive ownership of AI-generated text but self-declare as authors. ACM Transactions on Computer-Human Interaction, 31(2), 1-40. https://doi.org/10.1145/3637875

- Balabdaoui, F., Dittmann-Domenichini, N., Grosse, H., Schlienger, C., & Kortemeyer, G. (2024). A survey on students’ use of AI at a technical university. Discover Education, 3(1), 51. https://doi.org/10.1007/s44217-024-00136-4

- Paré, G., & Kitsiou, S. (2017). Chapter 9 Methods for Literature Reviews. In F. Lau & C. Kuziemsky (Eds.), Handbook of eHealth Evaluation: An Evidence-based Approach. University of Victoria. https://www.ncbi.nlm.nih.gov/books/NBK481583/

- McAdoo, T. (2024, February 23). How to cite ChatGPT. APA Style. apastyle.apa.org/blog/how-to-cite-chatgpt

- MLA Handbook. (2023, March 17). How do I cite generative AI in MLA style? MLA Style Center. style.mla.org/citing-generative-ai

- The Chicago Manual of Style. (n.d.). FAQ item. https://www.chicagomanualofstyle.org/qanda/data/faq/topics/Documentation/faq0422.html

- Shope, M. (2023). Best practices for disclosure and citation when using artificial intelligence tools. Geo. LJ Online, 112, 1. ssrn.com/abstract=4338115

- Hunter, J. (2006). The importance of citation. http://web.grinnell.edu/Dean/Tutorial/EUS/IC.pdf (1204 2007).

- Mansourizadeh, K., & Ahmad, U. K. (2011). Citation practices among non-native expert and novice scientific writers. Journal of English for Academic Purposes, 10(3), 152-161. https://doi.org/10.1016/j.jeap.2011.03.004

- Jin, Y., Yan, L., Echeverria, V., Gašević, D., & Martinez-Maldonado, R. (2025). Generative AI in higher education: A global perspective of institutional adoption policies and guidelines. Computers and Education: Artificial Intelligence, 8, 100348. https://doi.org/10.1016/j.caeai.2024.100348

- Monash University. (n.d.). Acknowledging the use of generative artificial intelligence. Student Academic Success. www.monash.edu/student-academic-success/build-digital-capabilities/create-online/acknowledging-the-use-of-generative-artificial-intelligence

- The University of North Carolina at Chapel Hill. (n.d.). AI Syllabus Language. TAR Heel Writing Guide Resources. tarheelwritingguide.unc.edu/ai-syllabus-language

- He, J., Houde, S., & Weisz, J. D. (2025). Which contributions deserve credit? Perceptions of attribution in human-AI co-creation. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (pp. 1-18). https://doi.org/10.1145/3706598.3713522

- Krakovetskyi, O. Y., & Shevchenko, N. Y. (2024). Risk analysis of generative artificial intelligence in education and research with guidelines for responsible use and role attribution. Taurida Scientific Herald. Series: Technical Sciences, (5), 50-59. https://doi.org/10.32782/tnv-tech.2024.5.5

- Handa, K., Bent, D., Tamkin, A., McCain, M., Durmus, E., Stern, M., Schiraldi, M., Huang, S., Ritchie, S., Syverud, S., Jagadish, K., Vo, M., Bell, M., & Ganguli, D. (2025, April 8). Anthropic education report: How university students use Claude. Anthropic. https://www.anthropic.com/news/anthropic-education-report-how-university-students-use-claude

- Freeman, J. (2025). Student Generative AI Survey 2025. Higher Education Policy Institute: London, UK. https://www.hepi.ac.uk/reports/student-generative-ai-survey-2025

- Smith, C., Shiekh, K., Cooreman, H., Rahman, S., Zhu, Y., Siam, M. K., ... & Fierro, G. (2024). Early adoption of generative artificial intelligence in computing education: Emergent student use cases and perspectives in 2023. In Proceedings of the 2024 on Innovation and Technology in Computer Science Education V. 1 (pp. 3-9). https://doi.org/10.1145/3649217.3653575

Appendix

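About the Authors

· 2018: Bachelor of Arts in Computer Science, Macalester College

· 2019-present: Completed coursework in the integrated M.S./Ph.D. program, Department of Computer Science, Korea University

Research interests: AI ethics, fairness, explainability, AI education

woojinkim1021@korea.ac.kr

· 2019: B.S., Department of Computer Science Education, Korea University

· 2024: M.Ed., Department of AI Convergence Education, Seoul National University

· 2024-present: Ph.D. student, Department of Computer Science, Korea University

· 2020-present: Informatics teacher, Seoul Metropolitan Office of Education

Research interests: AI education, convergence education, causal inference

eckdrnjs@korea.ac.kr

· 1988: B.S., Department of Computer Science, Korea University

· 1990: M.S. in Computer Science, University of Missouri-Rolla

· 1998: Ph.D. in Computer and Information Science, University of Florida

· 1999-present: Professor, Korea University (Department of Computer Science, Department of Computer Science Education)

· 2021-present: Director, Research Institute for Information and Creative Education (정보창의교육연구소), Korea University

Research interests: machine learning algorithms, AI education, AI-assisted education

harrykim@korea.ac.kr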